1. The Invisible Cost of the Enter Key

Next time you generate a whimsical AI video of a “dancing steak,” remember this: you are effectively firing up a grill somewhere in the physical world.

We often speak of “the cloud” as if it exists in some magical, weightless dimension floating above reality. But the cloud is not “up there.” It exists in enormous industrial buildings packed with servers, transformers, cooling systems, and power infrastructure that consume staggering amounts of electricity every second of the day.

Every time you hit “Enter” on a generative AI prompt, you are not simply activating software. You are triggering a physical reaction across thousands of heat-generating processors that push electrical systems closer to their limits.

To understand the scale, consider a recent AI video-generation experiment involving roughly 1,872 clips. The process consumed an estimated 110,000 watt-hours of electricity — enough to power an average American home for roughly three and a half days.
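To make those figures concrete, the short sketch below works through the arithmetic. It uses only the numbers quoted above; the function names are illustrative, and the household figure it prints is simply what the "three and a half days" comparison implies, not an independently sourced statistic.

```python
# Figures quoted in the text above.
TOTAL_WH = 110_000   # estimated energy for the whole experiment, in watt-hours
CLIPS = 1_872        # approximate number of clips generated
HOME_DAYS = 3.5      # "roughly three and a half days" of household use


def energy_per_clip_wh(total_wh: float, clips: int) -> float:
    """Average watt-hours spent per generated clip."""
    return total_wh / clips


def implied_home_kwh_per_day(total_wh: float, days: float) -> float:
    """Daily household consumption implied by the home-days comparison."""
    return (total_wh / 1000) / days


if __name__ == "__main__":
    print(f"{energy_per_clip_wh(TOTAL_WH, CLIPS):.1f} Wh per clip")
    print(f"{implied_home_kwh_per_day(TOTAL_WH, HOME_DAYS):.1f} kWh per home per day")
```

In other words, each clip averages a little under 60 watt-hours, and the comparison assumes a home that uses a bit more than 31 kilowatt-hours per day.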

We are now entering an era where digital curiosity carries a real-world energy price tag.

2. The AI Frenzy and the Rising Cost of Curiosity

The modern AI boom has created an unprecedented surge in computational demand.

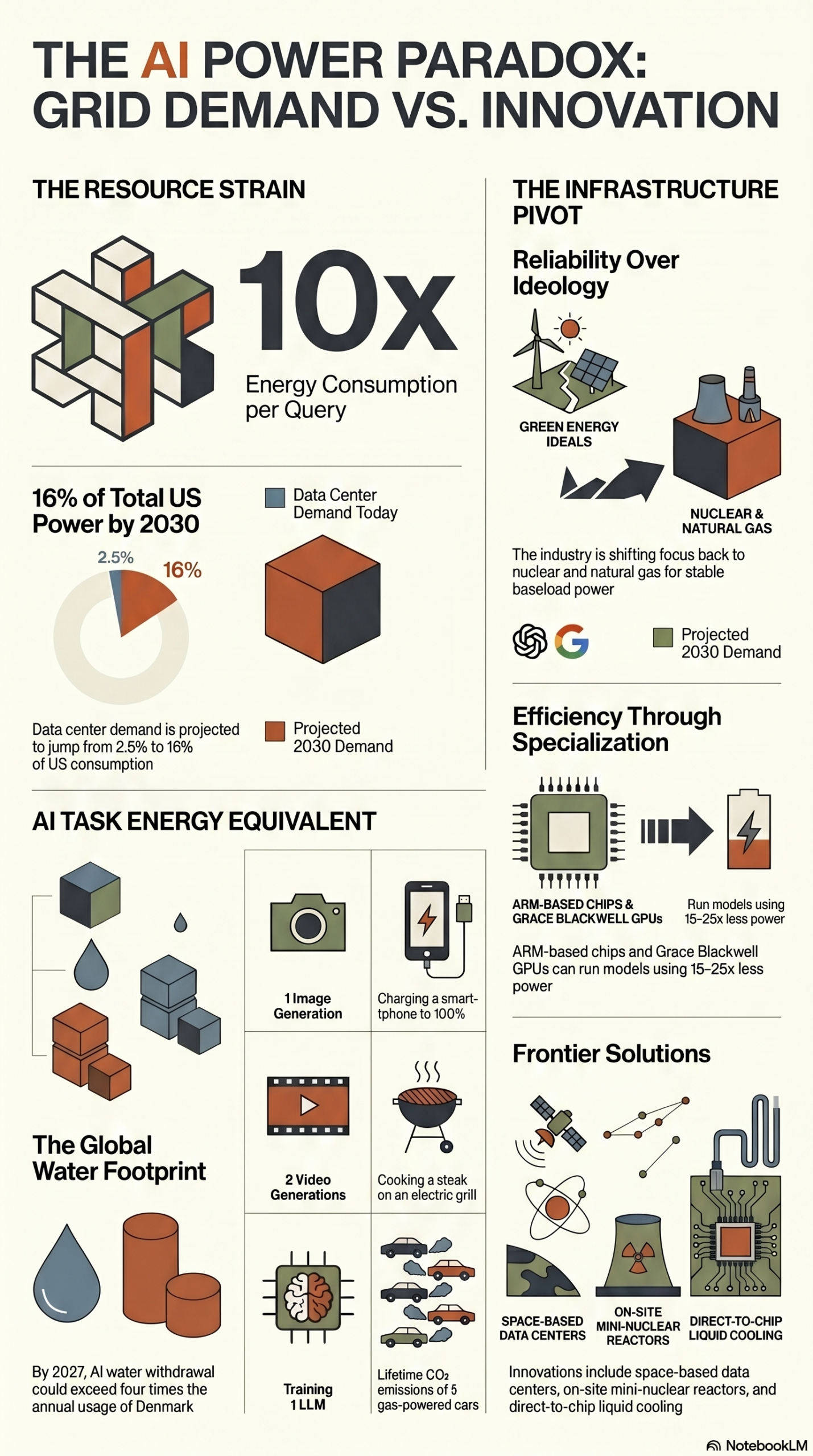

A single ChatGPT query can consume nearly ten times the electricity of a traditional Google search. In practical terms, one query is roughly equivalent to running a five-watt LED light bulb continuously for an hour.

Individually, that may seem insignificant. But globally, billions of prompts, image generations, videos, and AI-assisted searches create a cumulative energy burden that rapidly scales into grid-level demand.
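A rough sketch shows how quickly the light-bulb comparison scales. The per-query figure comes from the bulb analogy above (five watts for one hour); the daily query volume is a purely hypothetical round number chosen for illustration, not a measured statistic.

```python
BULB_WATTS = 5                            # five-watt LED bulb, from the text
BULB_HOURS = 1                            # running for one hour
WH_PER_QUERY = BULB_WATTS * BULB_HOURS    # 5 Wh per query

# HYPOTHETICAL volume, used only to illustrate scaling.
QUERIES_PER_DAY = 1_000_000_000


def daily_energy_mwh(wh_per_query: float, queries_per_day: int) -> float:
    """Total energy per day in megawatt-hours."""
    return wh_per_query * queries_per_day / 1_000_000


def average_power_mw(wh_per_query: float, queries_per_day: int) -> float:
    """Continuous power draw, in megawatts, that would deliver that daily energy."""
    return daily_energy_mwh(wh_per_query, queries_per_day) / 24


if __name__ == "__main__":
    print(f"{daily_energy_mwh(WH_PER_QUERY, QUERIES_PER_DAY):,.0f} MWh per day")
    print(f"{average_power_mw(WH_PER_QUERY, QUERIES_PER_DAY):,.0f} MW continuous")
```

Under that assumed volume, a five-watt-hour query adds up to thousands of megawatt-hours per day, or a constant draw of a couple of hundred megawatts.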

This is no longer just a software conversation.

It is now an infrastructure conversation.

The race for larger language models, faster image generation, and real-time AI assistants is creating one of the fastest-growing sources of power demand in modern technological history.

3. The 16-Ounce Prompt: AI’s Hidden Water Addiction

Electricity dominates the headlines, but AI has another dependency that receives far less attention: water.

Modern data centers generate extraordinary amounts of heat. To prevent GPUs and processors from overheating, many facilities rely on evaporative cooling systems that consume massive quantities of fresh water.

This creates a harsh engineering tradeoff:

- Evaporative cooling is highly energy-efficient.

- But it is also extremely water-inefficient.

Research suggests that as few as 10 to 50 ChatGPT prompts may consume the equivalent of a 16-ounce bottle of water through cooling processes.

Training large-scale AI models becomes even more extreme. Estimates indicate that training GPT-3 in a typical U.S. data center directly evaporated approximately 700,000 liters of clean water.
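The bottle comparison can be turned into per-prompt numbers with a few lines of arithmetic. This sketch assumes the "16-ounce bottle" means 16 US fluid ounces; everything else comes from the figures above, and the function names are illustrative.

```python
ML_PER_US_FL_OZ = 29.5735                  # millilitres in one US fluid ounce
BOTTLE_ML = 16 * ML_PER_US_FL_OZ           # assumes a 16 US fl oz bottle (~473 mL)
GPT3_TRAINING_LITERS = 700_000             # estimate quoted in the text


def ml_per_prompt(prompts_per_bottle: int) -> float:
    """Water per prompt if this many prompts use up one bottle."""
    return BOTTLE_ML / prompts_per_bottle


def bottles_for(liters: float) -> float:
    """Number of 16-ounce bottles equal to the given volume in liters."""
    return liters * 1000 / BOTTLE_ML


if __name__ == "__main__":
    low, high = ml_per_prompt(50), ml_per_prompt(10)
    print(f"{low:.1f} to {high:.1f} mL of water per prompt")
    print(f"{bottles_for(GPT3_TRAINING_LITERS):,.0f} bottles for GPT-3 training")
```

That works out to somewhere between a couple of teaspoons and a few tablespoons of water per prompt, and roughly one and a half million bottles for the GPT-3 training estimate.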

By 2027, global AI infrastructure may use more than four times as much water each year as the entire country of Denmark.

That is no longer just a technology issue.

It is a civilization-scale resource issue.

4. Data Center Alley and the Coming Resource Conflict

In Northern Virginia — often referred to as “Data Center Alley” — the pressure on local infrastructure is becoming impossible to ignore.

Some operators are now abandoning water-heavy cooling systems in favor of massive air-cooled facilities. Companies like Vantage are deploying enormous rooftop cooling units to reduce dependence on local water supplies.

But this creates a new problem:

Air cooling consumes substantially more electricity.

The industry is now trapped between two competing realities:

- Save electricity and consume more water.

- Save water and consume more electricity.

Neither option scales comfortably.

As AI demand accelerates, regions with limited water access or strained electrical infrastructure may eventually face difficult political and economic decisions about who receives priority access to resources.

5. The 2030 Power Crisis Nobody Wants to Talk About

Before ChatGPT launched in 2022, data centers represented roughly 2.5% of total U.S. electricity consumption.

That number is projected to explode.

Some forecasts suggest that by 2030, data centers could consume as much as 16% of all electricity generated in the United States — enough to power nearly two-thirds of American homes.
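To see what that jump implies year over year, the sketch below computes the compound annual growth rate in data centers' share of U.S. electricity. It treats the 2.5% figure as a 2022 baseline and assumes smooth compounding through 2030; both are simplifications made here for illustration, not claims from the forecasts themselves.

```python
SHARE_START = 2.5    # percent of U.S. electricity, treated as the 2022 baseline
SHARE_END = 16.0     # percent projected for 2030 in some forecasts
YEARS = 2030 - 2022  # assumed compounding period


def growth_multiple(start: float, end: float) -> float:
    """How many times larger the ending share is than the starting share."""
    return end / start


def compound_annual_growth(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    print(f"{growth_multiple(SHARE_START, SHARE_END):.1f}x increase in share")
    rate = compound_annual_growth(SHARE_START, SHARE_END, YEARS)
    print(f"{rate:.1%} growth in share per year")
```

Under those assumptions, the share would have to grow by roughly a quarter every year for eight years straight.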

At the same time, America’s electrical infrastructure is aging rapidly.

The average transformer in the United States is approximately 38 years old. Utilities may require tens of billions of dollars in upgrades simply to prevent large-scale instability during peak demand periods.

Without major investment, summer blackouts may eventually become less of a possibility and more of a mathematical inevitability.

The uncomfortable question becomes:

Do we prioritize residential air conditioning during a heatwave, or do we prioritize uninterrupted AI computation?

That question sounds absurd today.

It may not sound absurd ten years from now.

6. Reliability Over Ideology: The Great Energy Pivot

For years, many major technology companies publicly emphasized renewable energy initiatives centered around wind and solar power.

But generative AI changed the equation.

AI systems require continuous, uninterrupted baseload power. Wind and solar remain important technologies, but without major storage breakthroughs they cannot consistently provide the stable, always-on supply that large-scale AI infrastructure demands.

As a result, the conversation inside the tech industry has shifted:

The goal is no longer simply the “greenest” power.

The goal is now the most stable and reliable power possible.

This shift has produced a profound irony. Technologies once considered transitional or outdated are suddenly being revived to support AI growth.

In some regions, coal plant shutdowns have been delayed specifically to support new AI-focused data centers.

Meanwhile, companies are increasingly building self-contained power systems that bypass public infrastructure entirely, including dedicated natural gas plants built exclusively for private AI operations.

The AI race is quietly reshaping global energy priorities in real time.

7. Nuclear Power, Space-Based Data Centers, and the Future of AI Infrastructure

To sustain AI growth, technology leaders are now pursuing energy strategies that would have sounded like science fiction only a decade ago.

These include:

- Small modular nuclear reactors (SMRs)

- Experimental nuclear fusion projects

- Deep geothermal energy systems

- Massive private microgrids

- Orbit-based solar-powered data centers

The logic behind space-based infrastructure is surprisingly rational.

Space offers:

- Continuous solar exposure

- Natural cooling conditions

- No competition for land

- No freshwater requirements

- Higher solar energy yield than Earth-based systems

Some estimates suggest solar panels in orbit can generate up to six times more energy than identical panels on Earth because they operate in uninterrupted sunlight.

If reusable rocket systems continue reducing launch costs, space-based data centers may eventually become economically viable.

The cloud may literally leave Earth.

8. Efficiency as the Real Survival Strategy

If humanity cannot generate enough energy to sustain AI growth, then efficiency becomes the only realistic solution.

This is driving a major transition away from traditional x86 computing architecture toward ARM-based processors originally designed for energy-constrained mobile devices.

Efficiency is becoming the secret weapon of the AI age.

Modern AI chips built on architectures such as Nvidia’s Grace Blackwell reportedly deliver dramatic reductions in power consumption for the same workload compared to previous generations.

At the same time, companies such as Apple and Samsung are aggressively pursuing “On-Device AI,” where AI tasks are processed locally on phones and laptops instead of inside distant data centers.

Every AI request processed locally:

- Reduces electrical grid strain

- Reduces water consumption

- Reduces latency

- Reduces infrastructure expansion pressure

The future of AI may depend less on building larger systems and more on building smarter, more efficient ones.

9. Conclusion: The Civilization-Level Race

Humanity is entering a civilization-level competition for energy dominance.

AI is no longer merely software.

It is now a physical consumer of electricity, water, land, cooling systems, minerals, and industrial infrastructure on a global scale.

There is a profound irony in this moment. After decades spent promoting lower energy consumption and environmental restraint, humanity has created a technology ecosystem that may eventually require more power than anything we have ever constructed before.

The defining question of the next decade may not simply be what AI can do.

The real question may be:

What are we willing to sacrifice to keep it running?

Can innovation outpace resource exhaustion?

Or are we entering an era where computational growth fundamentally reshapes civilization itself?